That opens up the possibility of using deep learning with multispectral images. The previous restriction on the number of channels in a convolutional neural network has been relaxed. The cell in the bottom right of the plot shows the overall accuracy. These metrics are often called the recall (or true positive rate) and false negative rate, respectively. The row at the bottom of the plot shows the percentages of all the examples belonging to each class that are correctly and incorrectly classified. These metrics are often called the precision (or positive predictive value) and false discovery rate, respectively. The column on the far right of the plot shows the percentages of all the examples predicted to belong to each class that are correctly and incorrectly classified. Both the number of observations and the percentage of the total number of observations are shown in each cell. The off-diagonal cells correspond to incorrectly classified observations. The diagonal cells correspond to observations that are correctly classified. The rows correspond to the predicted class (Output Class) and the columns correspond to the true class (Target Class). Use the new plotconfusion function to show what's happening with your categorical classifications. You can also introduce gradient clipping, which can help keep the training stable in the face of rapid increase in gradients. When you train a network, now you can select the Adams solver or the RMSProp solver.

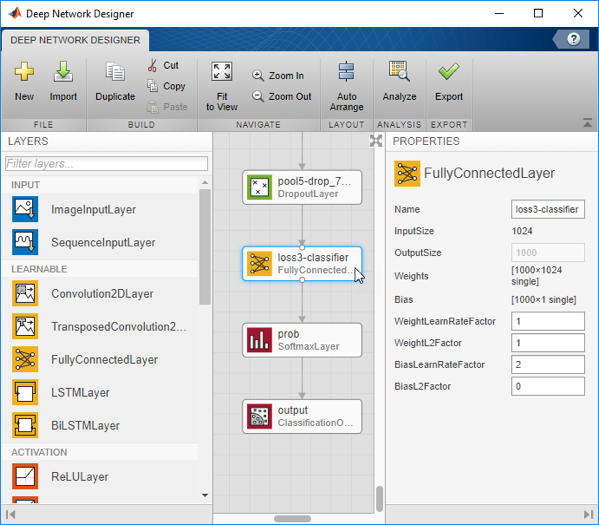

The new function bilstmLayer creates an RNN layer that can learn bidirectional long-term dependencies between time steps.

The doc example "Sequence-to-Sequence Regression Using Deep Learning" shows the estimation of engine's remaining useful life (RUL), formulated as a regression problem using an LSTM network. Regression problems, bidirectional layers with LSTM networks I'll focus mostly on what's in the Neural Network Toolbox, with also some mention of the Image Processing Toolbox and the Parallel Computing Toolbox. In this post, I'll summarize the other new capabilities. I showed one new capability, visualizing activations in DAG networks, in my 26-March-2018 post. As usual (lately, at least), there are many new capabilities related to deep learning. MathWorks shipped our R2018a release last month.
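To make the summary cells of the confusion matrix plot a bit more concrete, here is a minimal sketch in base MATLAB that computes the same quantities by hand. The matrix `C` and its counts are made up for illustration; the only thing taken from the description of plotconfusion is the layout, with rows as predicted classes and columns as true classes.

```matlab
% Hypothetical 3-class confusion matrix, laid out the way plotconfusion
% displays it: rows are predicted classes, columns are true classes.
C = [50  3  2;
      4 45  6;
      1  2 37];

correct = diag(C);                  % diagonal cells: correctly classified counts

% Far-right column of the plot: of everything predicted as class k,
% the fraction that truly is class k (precision) and its complement.
precision = correct ./ sum(C, 2);   % 3-by-1, one value per predicted class
falseDiscoveryRate = 1 - precision;

% Bottom row of the plot: of everything that truly is class k,
% the fraction predicted as class k (recall) and its complement.
recall = correct.' ./ sum(C, 1);    % 1-by-3, one value per true class
falseNegativeRate = 1 - recall;

% Bottom-right cell: overall accuracy across all observations.
accuracy = sum(correct) / sum(C(:));

fprintf('Precision: %s\n', mat2str(precision.', 3));
fprintf('Recall:    %s\n', mat2str(recall, 3));
fprintf('Accuracy:  %.3f\n', accuracy);
```

Precision is normalized by row sums and recall by column sums only because of the predicted-by-row layout; if your own matrix puts true classes in rows, swap the two. With the toolbox installed, calling plotconfusion on your target and output labels should draw these same summaries for you.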